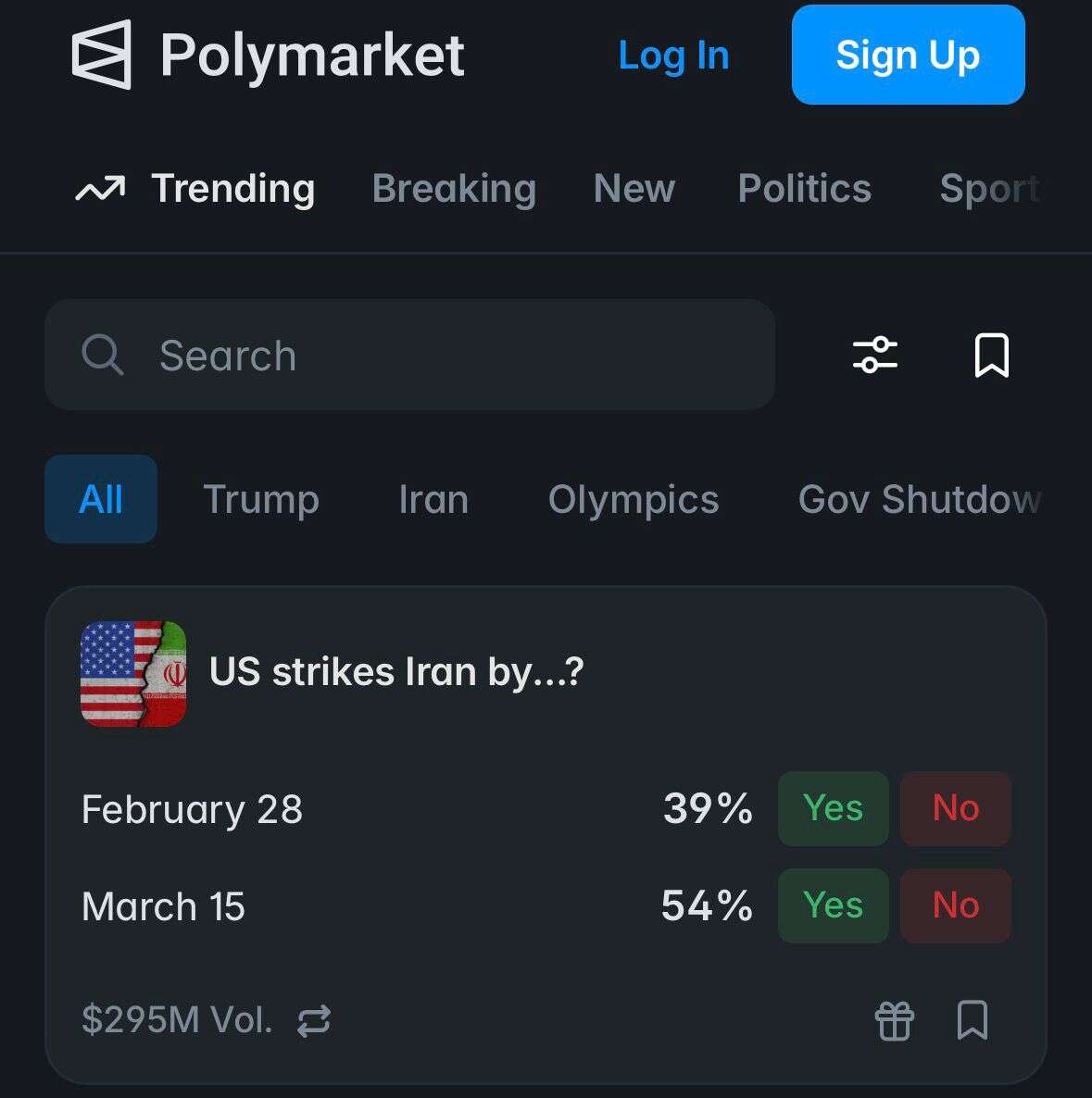

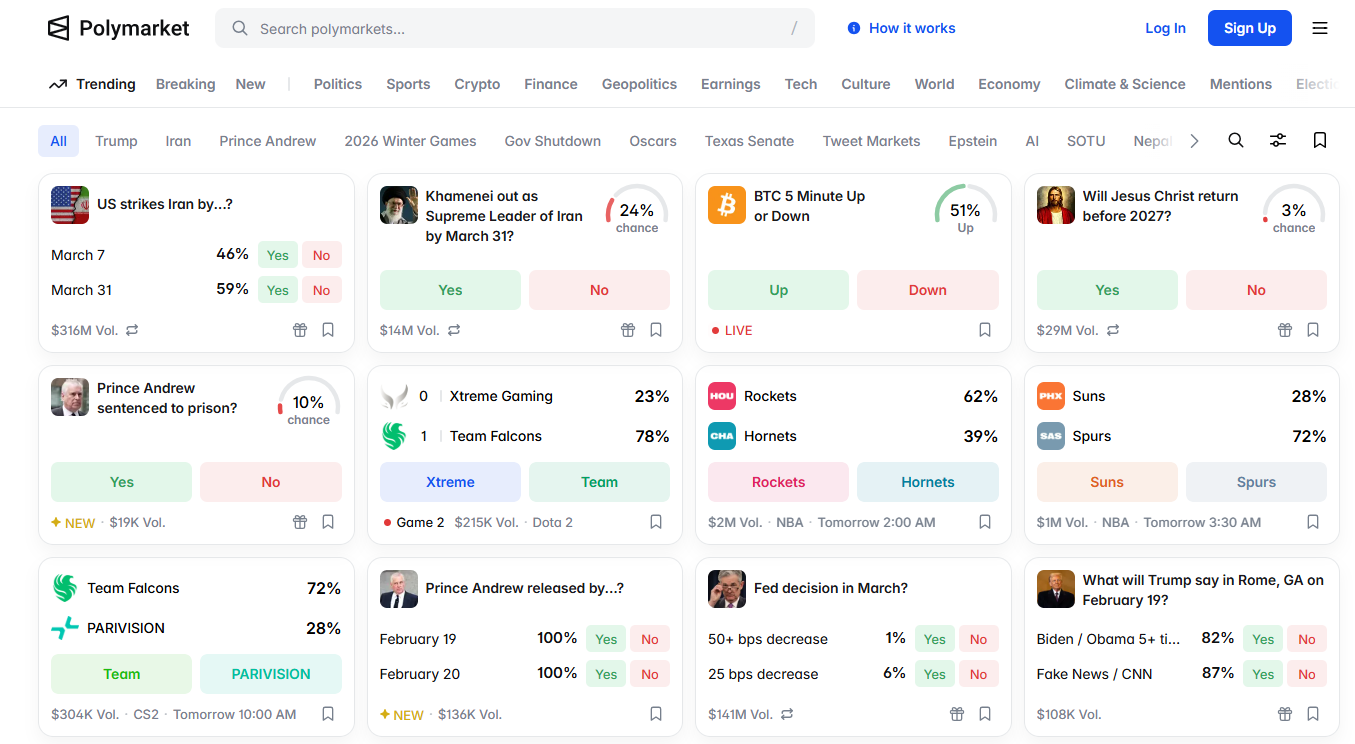

Will the United States strike Iran, and if so, when? Many of us are asking this question these days. Some follow White House statements closely and try to read between the lines, while others rely on analysts or gut feelings. And then there are those who simply open Polymarket and check what people are betting on.

Polymarket is a betting platform where people speculate, with real money, on the likelihood of future events: who will win elections, when a war might break out, or even when Taylor Swift will announce her engagement. Trends in the prediction market are often seen as a barometer of public sentiment, a form of "collective wisdom." However, several recent incidents suggest that in certain markets, people with inside information place bets already knowing the result.

"Polymarket has shaken up the defense and government sector. It has assigned monetary value to information that previously had none. [In other words] it has become like insider trading in a public company," warns Itai Schwartz, co-founder and CTO of the cybersecurity firm MIND, in an interview with Israel Hayom; MIND develops solutions to prevent information leaks while working with government and defense agencies. "And it's only going to get stronger. It's a breach that draws in thieves/hackers."

The controversy surrounding prediction markets has recently intensified following several high-profile cases: internationally, reports surfaced of unusual bets placed ahead of significant political events, including the capture of Venezuelan President Nicolás Maduro. In Israel, an indictment was filed in a case alleging that security information was used for similar betting. The common denominator: information that previously had only intelligence value suddenly acquired an instant market value.

According to Schwartz, the mere existence of such a market changes the risk model: "Once operational information gains market value, a new type of motivation emerges. It's no longer just espionage or ideology. It's economics."

The defense sector is more vulnerable

The paradox, according to Schwartz, is that the most sensitive organizations are not necessarily the best protected against internal misuse of information. "The absurd part is that in the defense sector, of all places, security information is the most susceptible to leaks," he says. "There is a perception that we are in a closed network, no phones, everything is a fortress, so internal compartmentalization is deemed less important. But that's exactly where the risk is greatest."

Unlike banks and public companies, where all access to information is recorded, security organizations find it much harder to track internal usage. "In the financial sector, because of regulations, there is far more oversight and documentation of who has access to what and when. In defense agencies, there is less external supervision, making real internal compartmentalization difficult to enforce."

According to Schwartz, prediction markets make this gap particularly dangerous: "Polymarket assigns immediate monetary value to information that used to be pure intelligence, only without the controls that the financial market has developed over decades." Only after a series of leaks involving sensitive data and intellectual property did things start to shift: "The penny dropped. Both defense organizations and manufacturers understand that the threat now comes from within."

Not a "regular" cybersecurity company

MIND was founded by Eran Barak, one of the founders of Hexadite (which was acquired by Microsoft), together with Itai Schwartz and Hod Bin-Noon. It is headquartered in Seattle, with its development team in Israel. The company employs around 55 people and plans to double its size this year.

Despite being classified as a cybersecurity company, MIND's approach differs from industry norms: it focuses not on external hacks but on internal use of information. The company develops a platform to protect organizational data in a world where information is spread between employees, the cloud, and various AI tools. At the heart of the system is an engine that automatically classifies which information is sensitive to the organization and analyzes behavioral patterns (for example, an employee suddenly accessing unusually large amounts of financial documents, or sending a large volume of data to an unusual recipient) and raises real-time alerts.
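To make the idea of flagging a sudden spike in document access concrete, here is a minimal sketch in Python. It is not MIND's method, which the article does not describe in detail. It simply assumes that each employee has a history of daily document-access counts and flags a day that sits far above that personal baseline. The function name `flag_unusual_access` and the threshold of three standard deviations are illustrative assumptions.

```python
from statistics import mean, stdev


def flag_unusual_access(history, today, threshold=3.0):
    """Return True if today's access count is far above the employee's baseline.

    history   -- past daily document-access counts for one employee (hypothetical data)
    today     -- today's document-access count
    threshold -- how many standard deviations above the mean counts as unusual
                 (an illustrative assumption, not a value from the article)
    """
    if len(history) < 2:
        # Not enough data to form a baseline, so do not raise an alert.
        return False

    baseline = mean(history)
    spread = stdev(history)

    if spread == 0:
        # Perfectly flat history: any increase stands out.
        return today > baseline

    # Flag only spikes above the baseline, not unusually quiet days.
    return (today - baseline) / spread > threshold


# Example: an employee who usually opens about ten documents a day.
usual_days = [10, 12, 9, 11, 10]
print(flag_unusual_access(usual_days, 80))  # a sudden bulk access
print(flag_unusual_access(usual_days, 12))  # an ordinary day
```

A real system would presumably weigh more context than raw volume, such as the sensitivity of the documents and who the recipient is, but the per-employee baseline captures the basic intuition of "unusual for this person."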

"We look at the interface between employee and information," explains Schwartz. "Who accesses what, in what volume, and in what context."

What employees are really doing

Because the platform monitors how employees use internal organizational information, it offers insight into phenomena that traditional cybersecurity tools did not previously reveal. "Salespeople leaving the company often take customer lists; it's far more common than people think. We even saw an employee transferring massive amounts of data from the corporate email directly to a competitor."

The company works with large organizations, ranging from software and internet firms to financial institutions and industrial companies, as well as infrastructure and telecommunications providers, all sectors that require strict oversight of exposure to sensitive information.

One particularly unusual case occurred at a large organization: "They hired an admin who passed all the interviews, but the person who started working was actually someone else. We discovered that the employee didn't match the record of the person who had been hired. It was fraud."

The chatbot era

If up until now the focus was on intentional leaks, the AI revolution adds a new layer of risk, one that stems directly from everyday work habits. The widespread use of AI tools at work leads employees to share organizational information to streamline tasks, process data, or draft documents, without realizing the potential consequences.

"Employees upload sensitive documents to their private chats without understanding that the information is leaving the organization," says Schwartz. "Developers do it to speed up work—sending code, design documents, or data without pausing to consider the risk."

The danger is not only that the information is transmitted outside the organization once it is entered into a chat tool, but that it can be stored and influence the system's future responses. "For example, if engineering plans for a vehicle are uploaded, they could become part of the model. In some cases, AI companies use conversation content to train their systems. We had a client whose internal product satisfaction survey, information that was meant to remain within the company, later appeared as part of a chatbot's response to a question about that same product."

In recent days, a case emerged in the United States in which a senior official in the government's cybersecurity apparatus uploaded sensitive documents to a public chatbot as part of routine work.

Between prediction markets that assign monetary value to intelligence and chatbots that can turn innocent sharing into a potential leak, organizations must adapt to a new reality in which the main risk is not necessarily an external attacker but the internal user.